IoT Device Battery Life: What Datasheets Don’t Tell You and the Questions Decision-Makers Should Ask

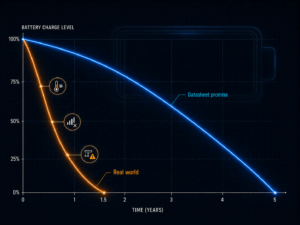

The datasheet isn’t lying – but it describes a world that doesn’t exist in practice. Anyone procuring IoT trackers or sensors should know the five factors that determine real-world battery life.

Key Takeaways

- IoT battery life figures on datasheets are measured under laboratory conditions – room temperature, perfect signal, minimal transmission frequency – and often differ significantly from real-world performance.

- Five factors determine the actual battery life of IoT devices: transmission interval, network quality and retry behavior, sleep modes (PSM and eDRX), operating temperature and battery chemistry, and the choice of communication protocol (MQTT, CoAP, or LwM2M).

- Instead of asking about battery life, decision-makers should request a power profile from the vendor – a current consumption curve under real operating conditions – and specifically ask about Exponential Backoff support, PSM/eDRX availability, and configurable transmission intervals.

A Promise That Holds Up in the Lab

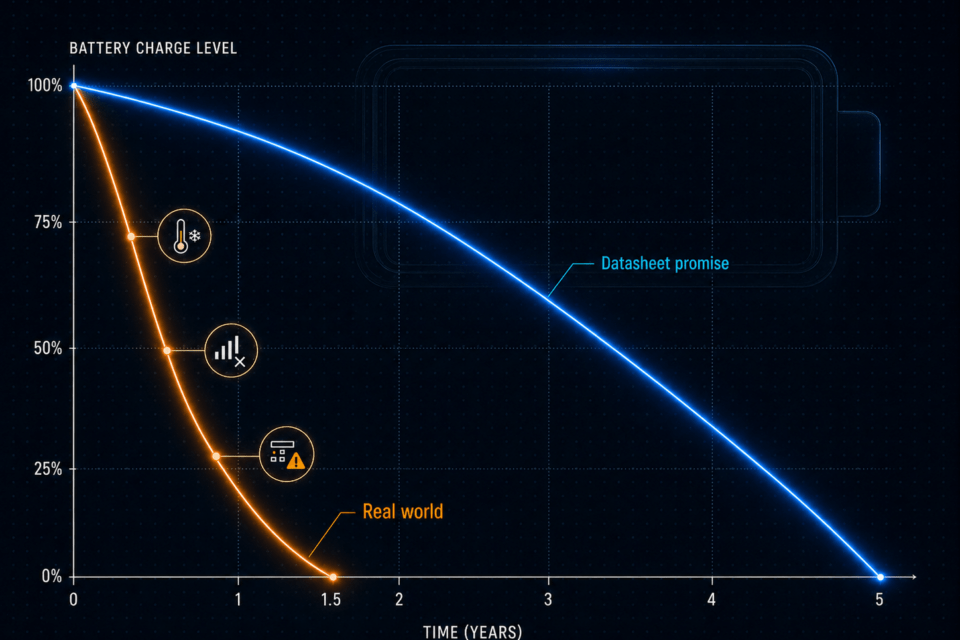

Imagine buying 300 trackers for your warehouse equipment, each with a promised battery life of five years. Eighteen months later, the first devices go silent. The manufacturer isn’t technically at fault. The datasheet was accurate – just measured under conditions that have little in common with your warehouse, your cold storage zones, and your cellular network. IoT battery life is one of the most widely misunderstood promises in the industry.

That is the real problem. Battery life in IoT datasheets is calculated under ideal conditions: room temperature around 25 degrees Celsius, strong cellular signal, one data packet per day, device otherwise in deep sleep. A world where trackers sit on a lab bench – not on a forklift shuttling between an outdoor depot and a freezer zone.

Lever 1: Optimizing the Transmission Interval – the Most Direct Factor in IoT Battery Life

How often a device transmits its location or sensor reading is the most direct factor in energy consumption. Every transmission has a cost: requesting a GPS fix, waking the cellular module, establishing a connection, sending data, tearing the connection down again. It sounds like milliseconds, but adds up considerably over months.

A tracker transmitting every 5 minutes wakes up six times as often as one transmitting every 30 minutes, and every wake-up pays the full startup cost of GPS fix and connection setup, no matter how little data is sent. Transmission energy therefore scales with the number of wake-ups rather than with the size of the payload. In practice, switching from 5- to 30-minute intervals can extend battery life several times over – without a single hardware change.

The critical question before purchasing is therefore: Is the transmission interval freely configurable, and what interval does the datasheet use as its basis for the battery life figure? If it states “1 transmission per 24 hours” but your use case requires tracking every 15 minutes, the device will transmit 96 times as often as the datasheet assumes, and the promised five years can shrink to a small fraction of that figure.
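The effect of the interval can be sketched with a deliberately simple model: a fixed charge per transmission plus a constant sleep current. All device values below (capacity, charge per transmission, sleep current) are hypothetical and chosen only for illustration. The model also ignores self-discharge, temperature, and retries, so it shows the trend rather than a forecast.

```python
def battery_life_days(capacity_mah, interval_min, charge_per_tx_mah, sleep_current_ma):
    """Estimate runtime from a fixed charge per transmission plus a constant sleep current."""
    tx_per_day = 24 * 60 / interval_min
    # Daily consumption = transmission bursts + 24 hours of sleep current.
    daily_mah = tx_per_day * charge_per_tx_mah + sleep_current_ma * 24
    return capacity_mah / daily_mah


# Hypothetical device values, not taken from any datasheet.
CAPACITY_MAH = 2000
CHARGE_PER_TX_MAH = 0.5   # GPS fix + connection setup + send + teardown
SLEEP_CURRENT_MA = 0.01   # 10 µA deep-sleep draw

for interval in (5, 15, 30, 24 * 60):
    days = battery_life_days(CAPACITY_MAH, interval, CHARGE_PER_TX_MAH, SLEEP_CURRENT_MA)
    print(f"every {interval:>4} min: {days:7.0f} days")
```

With these assumed values the sleep current barely matters at short intervals, so runtime is driven almost entirely by how often the device wakes up. Asking the vendor for the real charge per transmission lets you rerun the same arithmetic for your own interval.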

Lever 2: Poor Cellular Signal as a Battery Killer – Retry Behavior and Exponential Backoff

Cellular modules in IoT devices are patient optimists. If a device can’t find a sufficient network, it simply tries again. And again. And again. Every attempt draws current – often significantly more than a successful connection, because the module increases its transmission power in poor reception conditions.

This isn’t a design flaw – it’s physics. The problem arises when the firmware has no intelligent throttle built in. Well-implemented firmware uses a mechanism called Exponential Backoff: after each failed attempt, the waiting time before the next try doubles. Weaker implementations keep hammering away every few seconds until the battery is dead.

For procurement, this means: ask explicitly about the firmware’s retry behavior. How does the device behave under persistently poor signal? Is there an upper limit on attempts? Can the device switch to deep sleep when connectivity is lost, rather than searching indefinitely?

What Is Exponential Backoff?

- When an IoT device cannot establish a network connection, it tries again. The question is: how quickly? Basic firmware repeats the attempt at fixed intervals – say, every 30 seconds – until a connection is made or the battery runs out.

- Exponential Backoff is a smarter approach: after each failed attempt, the waiting time before the next doubles. First attempt fails: wait 30 seconds. Second fails: wait 60 seconds. Then 2 minutes, 4 minutes, 8 minutes. Once a configured maximum is reached – say, one hour – the interval stays constant.

- This saves energy because the modem sleeps during waiting periods instead of transmitting. It also reduces network load, because thousands of devices don’t all try to reconnect simultaneously at second intervals after an outage.

- Relevant for decision-makers: Whether a device supports Exponential Backoff rarely appears on the datasheet. It is a firmware property that must be explicitly confirmed with the manufacturer.
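The difference between fixed-interval retries and Exponential Backoff can be sketched in a few lines. The 30-second base and one-hour cap follow the example above. The function names and the 24-hour outage are assumptions made for illustration, not any vendor's firmware.

```python
def backoff_delays(base_s=30, max_s=3600):
    """Yield wait times that double after every failed attempt, capped at max_s."""
    delay = base_s
    while True:
        yield min(delay, max_s)
        delay = min(delay * 2, max_s)


def fixed_delays(interval_s=30):
    """Yield the same wait time forever (basic firmware behavior)."""
    while True:
        yield interval_s


def attempts_during_outage(outage_s, delays):
    """Count connection attempts that start before the outage ends."""
    elapsed = 0
    attempts = 0
    for wait in delays:
        if elapsed >= outage_s:
            break
        attempts += 1      # the attempt fails because the network is still down
        elapsed += wait    # the modem sleeps until the next attempt
    return attempts


OUTAGE_S = 24 * 3600  # hypothetical one-day loss of coverage
print("fixed interval:", attempts_during_outage(OUTAGE_S, fixed_delays()))
print("with backoff:  ", attempts_during_outage(OUTAGE_S, backoff_delays()))
```

Each avoided attempt is a high-current radio burst the battery does not have to supply. Production firmware often adds a small random offset to each wait so that a fleet does not retry in lockstep; that refinement is left out here for brevity.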

Lever 3: PSM and eDRX – How NB-IoT and LTE-M Extend Battery Life

Anyone operating IoT devices on NB-IoT or LTE-M – the two dominant cellular standards for low-power IoT applications – has access to two standardized energy-saving mechanisms: PSM and eDRX.

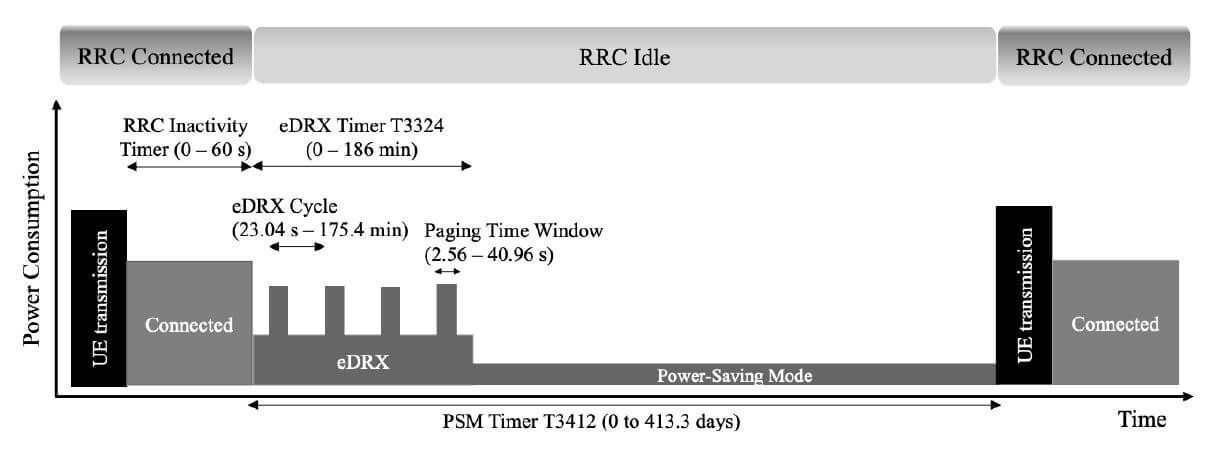

PSM stands for Power Saving Mode and works like deep sleep. The device tells the network when it will wake up, and is completely disconnected from the network until then. Current draw in PSM is minimal. NB-IoT devices can sleep in PSM for up to 310 hours before briefly checking in and going back to sleep. For applications that only need to deliver data once a day or once an hour, this is ideal.

eDRX, Extended Discontinuous Reception, is the counterpart for devices that need to remain somewhat more reachable. Rather than continuously listening for messages, the device also sleeps here – but wakes at configurable intervals to check whether a message is waiting. Lighter sleep, less energy saving than PSM, but more responsiveness.

Connection states and energy consumption of an IoT device with eDRX and PSM under NB-IoT. Source: “PSM and eDRX”, 1NCE

Telit describes how an LTE-M device that transmits once daily and wakes briefly every ten minutes can achieve a runtime of approximately 4.7 years on two standard AA batteries. That is not a lab scenario – it is a realistic figure for many industrial use cases, provided PSM and eDRX are correctly configured.

The catch: both modes must be supported by the network operator and activated on the device. In practice, this is far from guaranteed. Ask your network operator explicitly whether PSM and eDRX are available on your network before purchasing hardware based on that assumption.
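To make “activated on the device” concrete: on many cellular modules, PSM is requested with the standard `AT+CPSMS` command, which takes two timers as 8-bit strings (a 3-bit unit code followed by a 5-bit multiplier). The sketch below builds such a command. The helper names are hypothetical and only a subset of the unit codes is covered. The network may also grant different values than requested, so treat this as an illustration and check the module's AT command manual.

```python
# (unit length in seconds, 3-bit unit code); a subset of the 3GPP timer units.
T3412_EXT_UNITS = [(2, "011"), (30, "100"), (60, "101"),
                   (600, "000"), (3600, "001"), (36000, "010")]  # periodic check-in timer
T3324_UNITS = [(2, "000"), (60, "001"), (360, "010")]            # active time after check-in


def encode_timer(seconds, units):
    """Return unit code + 5-bit multiplier for the first unit that fits exactly."""
    for unit_s, code in units:
        value, remainder = divmod(seconds, unit_s)
        if remainder == 0 and 0 < value <= 31:
            return code + format(value, "05b")
    raise ValueError(f"{seconds} s cannot be encoded with the supported units")


def psm_command(checkin_period_s, active_time_s):
    """Build an AT+CPSMS request: enable PSM with the given timers."""
    periodic = encode_timer(checkin_period_s, T3412_EXT_UNITS)
    active = encode_timer(active_time_s, T3324_UNITS)
    return f'AT+CPSMS=1,,,"{periodic}","{active}"'


# Request a check-in every 24 hours with 10 seconds of reachability afterwards.
print(psm_command(24 * 3600, 10))
```

The device only enters PSM if the network accepts the request, which is why the operator question above matters as much as the firmware setting.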

Lever 4: Temperature Effects on IoT Batteries – Li-Ion vs. Li-SOCl₂

Lithium-ion batteries, as found in most compact IoT devices, prefer moderate conditions. At minus 20 degrees Celsius, they lose 20 to 40 percent of their capacity compared to room temperature, according to industry experts. A device with 10,000 mAh on the datasheet effectively has only 6,000 to 8,000 mAh available under deep-freeze conditions.

For applications in cold storage, outdoor environments in northern European climates, or supply chains with significant temperature fluctuations, Lithium Thionyl Chloride cells – Li-SOCl₂ for short – are the recommended alternative. This battery chemistry is considerably more tolerant of cold and is widely used in industrial IoT deployments for exactly these scenarios.

As Qoitech notes in an analysis of battery datasheets, there is also a meaningful difference between continuous discharge and pulse discharge that most datasheets do not capture. IoT devices discharge batteries in pulses, not continuously. Some battery types handle this considerably better than others.

Lever 5: MQTT, CoAP, and LwM2M – Which Protocol Is Easiest on the IoT Battery?

PSM and eDRX determine how often a device talks to the network. What actually travels over the connection during each exchange is determined by the communication protocol – and here, too, the differences are substantial.

MQTT, the Message Queuing Telemetry Transport protocol, dates back to 1999 and has become the de facto standard for IoT communication, deeply embedded in cloud platforms such as AWS and Azure. It operates on a publish/subscribe model: devices publish messages to a central broker, which forwards them to every client subscribed to the matching topic. MQTT runs over TCP, a connection-oriented transport protocol that acknowledges the data it delivers. Reliable – but not lean.

CoAP, the Constrained Application Protocol, was developed as a lightweight alternative for resource-constrained devices. It uses UDP, a connectionless protocol that sends data packets without delivery confirmation. This significantly reduces overhead – the volume of data transmitted beyond the actual payload. In a practical test by 1NCE, documented in their blog series on lean protocols in LPWAN, switching from MQTT to CoAP reduced energy consumption by 14 to 48 percent depending on packet size.

LwM2M, Lightweight Machine-to-Machine, builds on CoAP and extends it with standardized device management functions: remote firmware updates, configuration changes, status monitoring. It is considered a modern successor to MQTT for professional, scalable IoT fleets – though it is not yet fully supported by all major cloud platforms.

For decision-makers, this means: the protocol question is not purely a developer decision. It directly affects battery life and therefore operating costs across the entire lifetime of a fleet. Ask vendors which protocol the device uses, whether it is configurable, and whether CoAP or LwM2M is available as an option.

Assessing IoT Battery Life Realistically: The Right Questions to Ask Your Vendor

The battery life figure on a datasheet is a starting point, not a guarantee. Anyone procuring hardware for a multi-year fleet deployment should ask at minimum these questions:

- At what transmission interval was the battery life figure calculated?

- Does the firmware support Exponential Backoff when connectivity fails?

- Are PSM and eDRX implemented and configurable?

- What battery chemistry is used, and what temperature range is it rated for?

- Which communication protocol does the device use, and can it be switched to CoAP or LwM2M?

And then one more question that catches many vendors off guard: “Can you show us a power profile?” A power profile is a curve showing the device’s current consumption over a representative period – not just peak draw during transmission, but also standby current during sleep, the startup phase of a GPS fix, and behavior under poor network conditions. Serious manufacturers have this data. Those who can’t or won’t provide it are already telling you something.
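Once a vendor provides a power profile, turning it into a runtime estimate is simple arithmetic: sum the charge of each phase, divide by the cycle length to get the average current, and divide the usable capacity by that average. The phases and figures below are hypothetical placeholders for one 15-minute cycle, not measurements of any real device.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    current_ma: float
    duration_s: float


def average_current_ma(phases):
    """Time-weighted average current over one full cycle."""
    total_charge = sum(p.current_ma * p.duration_s for p in phases)  # in mA*s
    total_time = sum(p.duration_s for p in phases)
    return total_charge / total_time


def runtime_days(usable_capacity_mah, phases):
    """Runtime if the cycle repeats until the usable capacity is spent."""
    return usable_capacity_mah / average_current_ma(phases) / 24


# Hypothetical 15-minute cycle (900 s); replace with the vendor's measured profile.
CYCLE = [
    Phase("GPS fix", 25.0, 30),
    Phase("network attach", 80.0, 5),
    Phase("transmit", 120.0, 2),
    Phase("deep sleep", 0.01, 863),
]

print(f"average current: {average_current_ma(CYCLE):.2f} mA")
print(f"runtime on 2000 mAh: {runtime_days(2000, CYCLE):.0f} days")
```

Swapping in a poor-signal profile with longer attach and transmit phases, or a capacity derated for cold, shows quickly how far a deployment can drift from the datasheet figure.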

Battery life is – and this is the real point of this entire discussion – not a hardware specification. It is a system outcome. Hardware, firmware, network connectivity, and operating environment all interact. The datasheet describes only one of these factors, and even then under conditions that often have little in common with your actual deployment.