Nvidia and ARM bring AI into the IoT

A collaboration between ARM and Nvidia is intended to enable deep learning and artificial intelligence (AI) on Internet-enabled devices.

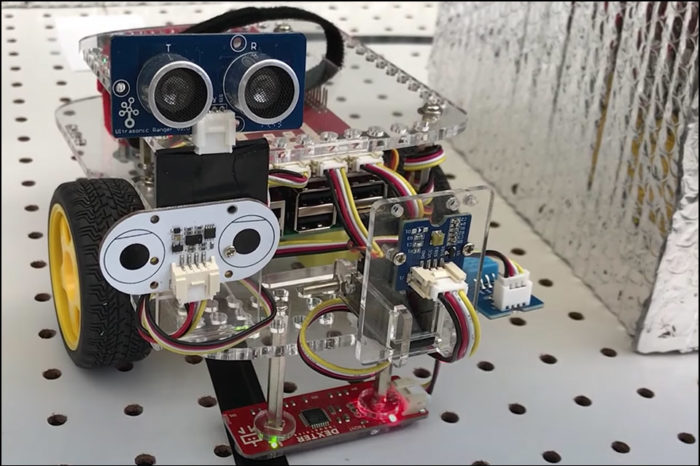

Nvidia and ARM have announced a collaboration to integrate Nvidia’s Deep Learning Accelerator (NVDLA) architecture into ARM’s Project Trillium machine learning platform. The combination is designed to make it easier for manufacturers of IoT devices to integrate artificial intelligence (AI) into their designs and to help them manufacture and offer intelligent products at low prices.

NVDLA is based on Nvidia’s “Xavier” autonomous machine system on a chip (already used in concept vehicles for autonomous driving at Volkswagen, among others). It is supported by the company’s development tools, including upcoming versions of TensorRT, Nvidia’s deep learning inference optimizer and runtime. According to Nvidia, NVDLA’s open source design should make it possible to add new functions regularly.

While cloud-connected IoT devices can draw on extensive AI and machine learning capabilities, unreliable network connectivity, overall device security and the need to process large amounts of sensor data in real time mean that IoT device manufacturers will increasingly rely on edge computing, in which part of the data processing is performed on the IoT device itself.

More performance in IoT

“Accelerating AI at the edge is critical in enabling Arm’s vision of connecting a trillion IoT devices.” – Rene Haas (Vice President, ARM)

ARM and Nvidia stated that integrating NVDLA with Project Trillium will provide developers with maximum performance by leveraging the flexibility and scalability of ARM across a wide range of IoT devices.

“This is a win/win for IoT, mobile and embedded chip companies looking to design accelerated AI inferencing solutions” – Karl Freund (analyst at Moor Insights & Strategy)

It is an exciting cooperation that could open up many possibilities for smart homes, building automation and self-sufficient systems in a wide variety of application scenarios.