The End of Cheap AI and What It Means for Connected Devices

OpenAI and Anthropic are battling capacity shortages, rising prices and too much downtime. For teams that have built smart devices on cloud AI, this is becoming a real operational problem – and a reason to ask a question that has been overdue for a while: how dependent do we actually want to be?

Key Takeaways

- According to the Wall Street Journal, OpenAI missed its internal user targets for 2025 and is struggling with slowing revenue growth. Anthropic, meanwhile, is raising prices and shifting business customers from flat-rate to consumption-based billing.

- IoT projects that rely on external cloud services for AI processing carry a risk that has long been underestimated: outages, higher costs, and no control over the infrastructure they depend on.

- Running AI directly on the device, rather than querying the cloud every time, is moving from niche to mainstream in 2026 – not just for technical reasons, but because the economics are shifting.

A Crack in the Foundation

Anyone reading the Wall Street Journal’s report on OpenAI these days gets the picture of a company beginning to buckle under the weight of its own ambitions. The goal of reaching one billion weekly active ChatGPT users by the end of 2025 was missed. Annual revenue fell short of internal targets. CFO Sarah Friar reportedly expressed doubts internally about whether the company’s data centre commitments of around $600 billion would remain financeable.

That sounds like a story for investors. For IoT developers, it is more than that.

At the same time, Anthropic – the strongest competitor in the enterprise segment – is showing similar signs of strain. Through early April 2026, Anthropic’s service achieved an availability rate of 98.95 percent. That sounds reasonable, but it is not. Extrapolated over a full year, that works out to roughly 92 hours of downtime, compared with the roughly 50 minutes per year that professional cloud providers guarantee as their standard. Retool founder David Hsu switched from Anthropic to OpenAI for exactly this reason, according to the Wall Street Journal. Whether he jumped from the frying pan into the fire remains to be seen.
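The extrapolation is plain arithmetic and easy to reproduce. The following is a minimal sketch, assuming a non-leap year of 8,760 hours. The 99.99 percent value is an assumption added here for comparison, chosen because it is the availability level that corresponds to roughly 50 minutes of downtime per year; it is not a figure from the reporting.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year


def annual_downtime_hours(availability_percent: float) -> float:
    """Return the yearly downtime implied by an availability percentage."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR


# 98.95 percent is the figure quoted above; 99.99 percent is an assumed
# comparison value, not a number taken from the article's sources.
for availability in (98.95, 99.99):
    hours = annual_downtime_hours(availability)
    print(f"{availability}% -> {hours:.1f} h/year ({hours * 60:.0f} min)")
```

A difference of about one percentage point in availability therefore separates several days of outage per year from less than an hour.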

Who Has Been Picking Up the Tab

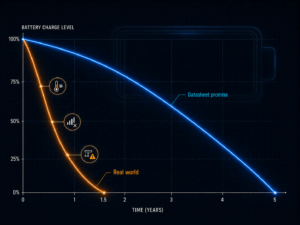

AI services have been remarkably cheap in recent years. That was not an accident – it was a deliberate choice: investors subsidised low prices to acquire users as fast as possible. 404media draws a fitting comparison to Uber in its early years, when every ride was offered below cost. At some point, that ends. For AI services, that point appears to have arrived.

Anthropic has already moved its business customers to consumption-based billing. Instead of a fixed monthly fee, they now pay based on actual usage. At the same time, the cost of computing capacity has risen sharply: rental prices for modern AI chips increased by 48 percent in just two months according to industry reports, and another major provider raised its prices by more than 20 percent. GitHub also restricted access to its AI coding tool Copilot and temporarily paused new signups.

Anyone who has been budgeting AI costs based on last year’s prices is sitting on a risk.

Why Connected Devices Feel This First

Connected systems have an inconvenient characteristic: they run continuously. There is no user who briefly waits, no error message to click away. A sensor that takes a reading every 30 seconds and forwards it to a cloud service for analysis has no fallback when that service suddenly becomes unavailable or costs ten times as much.

The dependency has grown quietly in many projects, without ever being named as a risk. Cloud AI was affordable, well-documented and became more capable year after year without requiring any effort from the teams using it. That was convenient. Companies running AI-powered operations on this basis today are doing so at prices that are structurally unsustainable – and may one day find that their costs have multiplied or that access has simply been restricted.

For IoT projects, three scenarios are particularly precarious: systems that need to respond in real time and cannot afford the detour through the cloud; systems where an AI service outage shuts down operations entirely; and projects where costs grow disproportionately as the number of devices scales up.
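The cost scenario can be made concrete with a back-of-the-envelope model. This is a rough sketch under stated assumptions: every device sends one request every 30 seconds (the interval used as an example above), and billing is purely consumption-based. The function name `monthly_cloud_cost` and the price of 0.50 per 1,000 requests are hypothetical placeholders, not a real tariff.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def monthly_cloud_cost(devices: int,
                       interval_s: int = 30,
                       price_per_1k_requests: float = 0.50,
                       days: int = 30) -> float:
    """Estimate a monthly bill under consumption-based pricing.

    price_per_1k_requests is a made-up placeholder, not a real tariff.
    """
    requests_per_device = (SECONDS_PER_DAY // interval_s) * days
    total_requests = devices * requests_per_device
    return total_requests / 1000 * price_per_1k_requests


# Compare fleet sizes that differ by a factor of ten each.
for fleet in (100, 1_000, 10_000):
    print(f"{fleet:>6} devices: {monthly_cloud_cost(fleet):>12,.2f} per month")
```

With these placeholder numbers, 100 devices already generate 8.64 million requests a month. Under consumption billing the bill grows in step with the fleet, and any price increase by the provider multiplies on top of that.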

AI on the Device: From Experiment to Standard

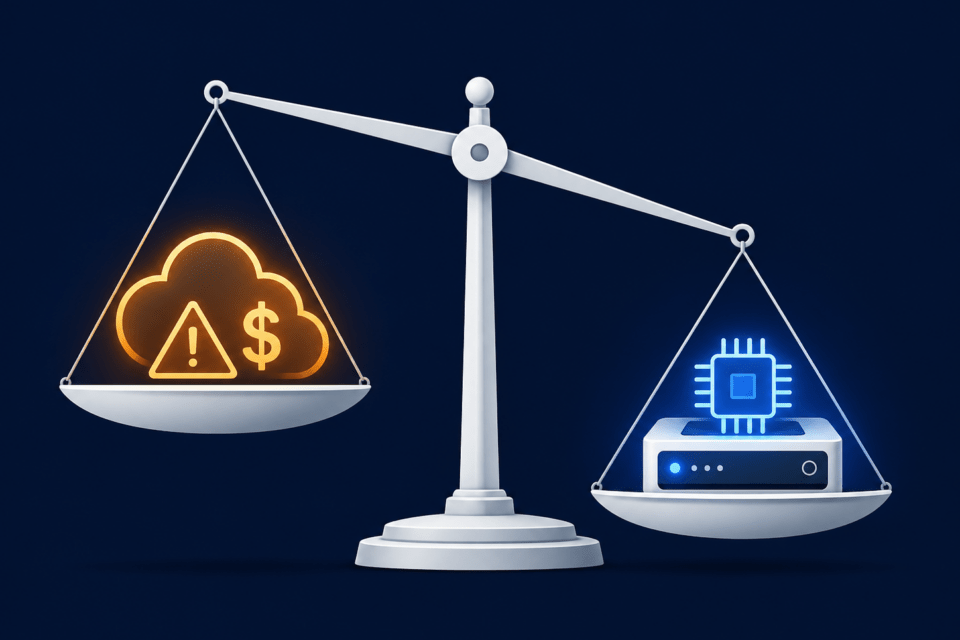

The response to cloud dependency is not new, but it is being taken more seriously than ever: running AI processing directly on the device or in a local network node, rather than querying an external service every time. This not only saves money and reduces latency – the delay between a request and its response – but also makes systems less dependent on the reliability of external providers.
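One way to picture this is a local-first design in which the cloud is optional help rather than a hard dependency. The sketch below is illustrative only: `classify_local` is a hypothetical stand-in for an on-device model, the thresholds and labels are invented, and `cloud_down` simulates an unreachable provider instead of calling any real API.

```python
from typing import Callable, Tuple

Reading = float
Verdict = Tuple[str, str]  # (label, source of the decision)


def classify_local(value: Reading) -> Tuple[str, float]:
    """Hypothetical on-device model: returns (label, confidence)."""
    if value > 80.0:
        return "alarm", 0.95
    if value < 60.0:
        return "normal", 0.95
    return "uncertain", 0.50  # borderline band where the small model is unsure


def classify(value: Reading,
             cloud: Callable[[Reading], str],
             min_confidence: float = 0.8) -> Verdict:
    """Answer locally when possible; ask the cloud only for unclear cases."""
    label, confidence = classify_local(value)
    if confidence >= min_confidence:
        return label, "local"
    try:
        return cloud(value), "cloud"
    except (TimeoutError, ConnectionError):
        # Cloud outage: keep operating with a conservative local default.
        return "alarm", "local-fallback"


def cloud_down(value: Reading) -> str:
    """Simulates an unreachable provider."""
    raise ConnectionError("service unavailable")


print(classify(42.0, cloud_down))  # clear case, decided on the device
print(classify(70.0, cloud_down))  # unclear case during an outage
```

The design choice worth noting is that an outage changes the quality of the answer for borderline readings, but never stops the device from producing one.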

According to an analysis by IoT Tech News, devices with embedded AI are leaving the pilot project phase in 2026 and appearing in mainstream product portfolios. The timing is no coincidence. The market research firm IDC describes the trend as structural: AI data centres are claiming a growing share of globally available chip production, driving up costs for everyone – including manufacturers of small IoT hardware.

In a blog post from January 2026, Dell architect Daniel Cummins cites Dell CTO John Roese, who argues that running models locally will become the norm to insulate organisations from external disruptions. For time-critical applications – machine control, medical devices, autonomous systems – cloud dependency was never a viable option to begin with. But the argument increasingly holds for less critical projects too, once the actual costs and risks are honestly assessed.

Three Questions Worth Asking Now

This is not a call to immediately tear out every cloud AI integration. For compute-intensive tasks, infrequent processing jobs or training new models, external services remain the right choice. But every team building AI into connected products should now honestly answer three questions.

- What happens to my system if the AI service goes down for two hours? If the answer is unsatisfying, that is an architecture problem, not an operations problem.

- How do my costs evolve if ten times as many devices use the service as today? Many projects were costed at prices that may not hold.

- Which AI tasks genuinely need a powerful external service, and which could a smaller, locally running model handle? For many typical IoT tasks – anomaly detection, classification, pattern recognition – lean, specialised on-device models are entirely sufficient.
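To give a sense of how little code a local anomaly check can require, here is a minimal sketch using a rolling z-score. It is a simple statistical stand-in rather than a trained model, and the class name `RollingAnomalyDetector`, the window size, the threshold and the sample readings are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags readings that deviate strongly from a recent window."""

    def __init__(self, window: int = 20, threshold: float = 3.0) -> None:
        self.values = deque(maxlen=window)
        self.threshold = threshold

    def update(self, value: float) -> bool:
        """Return True if value is anomalous relative to the window so far."""
        anomalous = False
        if len(self.values) >= 5:  # wait for a few samples before judging
            mu = mean(self.values)
            sigma = pstdev(self.values)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
        if not anomalous:
            self.values.append(value)  # keep outliers out of the baseline
        return anomalous


detector = RollingAnomalyDetector()
readings = [20.1, 20.3, 19.9, 20.0, 20.2, 20.1, 35.0, 20.0]  # invented data
flags = [detector.update(r) for r in readings]
print(flags)
```

In this example only the reading of 35.0 is flagged. A check like this runs in constant memory on the device and needs no network connection; whether it is sufficient for a given product depends on the signal and has to be validated against real data.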

The current crisis among the major AI providers is not a temporary bottleneck. It is the result of a pricing strategy built on rapid growth rather than long-term sustainability. For anyone building smart devices, this is a good moment to examine the dependencies that have quietly accumulated over the past few years of comfortable, cheap AI.