Industrial IoT: How intelligent sensors help speed up complex ecosystems

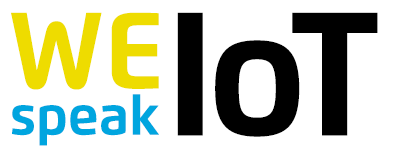

Imagine an industrial environment: a large production hall where complex machines work around the clock, interact with each other and depend on each other. Now imagine an Industrial IoT (IIoT) ecosystem whose task is to monitor every single detail of the production process: positions, speed, temperature, pressure, energy consumption, filling levels – all the metrics that play a crucial role in an efficient process. To reduce idling, prevent failures or simply save resources, hundreds, if not thousands, of sensors are needed. The more the better. But with only a centralized intelligence, the IIoT could quickly become a bumpy ride.

Operational efficiency is one of the key attractions of the IIoT. By introducing automation and flexible production techniques, manufacturers could boost their productivity by up to 30 percent. [1] To boost efficiency, data is needed: data on every single detail of what is going on in the production process.

When searching for ways to deal with the huge amounts of data coming from IIoT sensors, engineers face several challenges. One of the most important is probably time: time that is wasted while huge amounts of data are being transferred, time that is lost while this data is being analyzed, and time that passes before a command can be sent back to the system.

Industrial IoT produces huge amounts of data

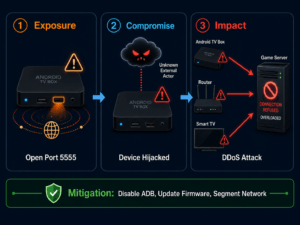

A simple way of collecting data is to attach all kinds of sensors to all kinds of machines. The data is then sent to one or more nearby gateways and from there to a central unit, in most cases a platform in the cloud. This centralized entity has a lot of processing power to sort and analyze the received data. Once the data is processed, commands can be sent back to the machines to keep the production process running. At the same time, other tasks are executed, for example the ordering of supplies. In this setup, where the intelligence lies in the cloud, the sensors themselves can be kept cheap and simple.

The sticking point is this: when all the data is processed in the cloud, the raw metrics need to be transferred. And there can be a lot of them. In a harsh industrial environment, technologies like WiFi, which we know from home, might not be sufficient. Yes, WiFi delivers high bandwidth, but its range is rather limited, especially in an environment that is packed with all kinds of machines made of steel and other metals. So other solutions have to be implemented.

Cable? Well, sensors with cables are even cheaper, but it might turn out to be complex, if not impossible, to wire the machines in a sound manner, especially in areas with many moving parts. So far, radio technology in the 868 MHz band has proven to be solid and reliable. But the range comes at a price: bandwidth is scarce. The solution: if you cannot increase the bandwidth, you have to decrease the amount of data. How? Move some of the intelligence to the edge of the network.
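To get a feeling for how much there is to gain, the trade-off can be put into a rough back-of-the-envelope calculation. The sketch below compares a sensor that forwards every raw sample with one that only sends periodic summaries. All figures (sample rate, payload sizes, summary interval) are purely hypothetical and chosen for illustration; they do not describe any particular sensor or radio link.

```python
def raw_bytes_per_hour(samples_per_second: float, bytes_per_sample: int) -> float:
    """Payload volume for one sensor that forwards every raw sample."""
    return samples_per_second * bytes_per_sample * 3600


def summarized_bytes_per_hour(summaries_per_hour: float, bytes_per_summary: int) -> float:
    """Payload volume for one sensor that only sends periodic summaries."""
    return summaries_per_hour * bytes_per_summary


# Hypothetical figures: 10 samples per second at 4 bytes each,
# versus one 12-byte summary (for example min, max, mean) per minute.
raw = raw_bytes_per_hour(samples_per_second=10, bytes_per_sample=4)
summarized = summarized_bytes_per_hour(summaries_per_hour=60, bytes_per_summary=12)
reduction_factor = raw / summarized

print(f"raw: {raw:.0f} B/h, summarized: {summarized:.0f} B/h, factor: {reduction_factor:.0f}")
```

Under these assumed numbers, a single sensor would send 144,000 bytes per hour in raw form and 720 bytes per hour as summaries. Multiplied by hundreds or thousands of sensors, that difference decides whether a low-bandwidth link is enough.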

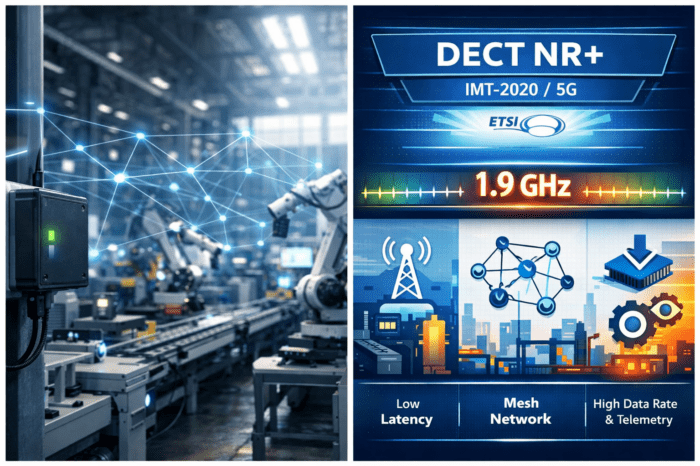

Interpretation of metrics starts on the sensors

Sensors equipped with small microprocessors that can perform certain calculations could pre-process the data. These intelligent sensors would stop sending every little bit to the cloud. They would still collect data from the machine, but instead of just forwarding it, they would interpret the metrics at the edge of the network, right where they were measured. In that kind of setup, first decisions could be made even before the cloud gets involved. With better and cheaper microprocessors coming onto the market, the goal of cheap but intelligent sensors is within reach.
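What such pre-processing could look like is sketched below: a sensor condenses one measurement window into a handful of values and makes a first local decision. This is a minimal illustration, not a reference design. The names `WindowSummary`, `summarize_window` and `alarm_threshold` are hypothetical, and a real sensor would more likely run comparable logic in C on a microcontroller.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass
class WindowSummary:
    minimum: float
    maximum: float
    average: float
    alarm: bool  # first decision, made locally on the sensor


def summarize_window(readings: Sequence[float], alarm_threshold: float) -> WindowSummary:
    """Condense one window of raw readings into a summary plus a local alarm flag."""
    if not readings:
        raise ValueError("readings must not be empty")
    peak = max(readings)
    return WindowSummary(
        minimum=min(readings),
        maximum=peak,
        average=mean(readings),
        alarm=peak > alarm_threshold,  # hypothetical rule: any reading above the limit
    )


# Hypothetical temperature window in degrees Celsius.
summary = summarize_window([71.2, 71.5, 72.1, 71.9], alarm_threshold=85.0)
print(summary)
```

Instead of four raw readings, only the summary would leave the sensor, and the alarm flag is available immediately, without a round trip to the cloud.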

Smart sensors could drastically reduce the amount of data that goes into the cloud. If action is needed, the system could react on its own. Security can benefit as well if no single centralized entity has the last word on every sensor and actuator. The cloud remains important for analysis and monitoring, but the main decisions are made at the edge, right where the machines are.
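One common way to cut data volume at the source is report-by-exception, sometimes called a deadband filter: a value is only transmitted when it differs from the last transmitted value by at least a configured amount. The article does not prescribe this technique; the sketch below is just one possible illustration, and the class name `DeadbandReporter` and the sample readings are hypothetical.

```python
from typing import Optional


class DeadbandReporter:
    """Report a value only if it moved at least `deadband` away from the last report."""

    def __init__(self, deadband: float) -> None:
        self.deadband = deadband
        self.last_reported: Optional[float] = None

    def should_report(self, value: float) -> bool:
        # The very first value is always reported so the cloud has a baseline.
        if self.last_reported is None or abs(value - self.last_reported) >= self.deadband:
            self.last_reported = value
            return True
        return False


# Hypothetical readings; only significant changes are sent upstream.
reporter = DeadbandReporter(deadband=0.5)
readings = [20.0, 20.1, 20.2, 20.8, 20.9, 21.4]
sent = [r for r in readings if reporter.should_report(r)]
print(sent)
```

With these sample readings, three of six values are transmitted. How wide the deadband should be depends on the process: too narrow and little is saved, too wide and relevant changes go unnoticed.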
